PostgreSQL and SMR Drives

Introduction

Random disk overwrites on magnetic storage have long been presumed to be essentially the cost of one disk seek. With effective buffering and checkpointing, that can be a tolerable cost.

That cost model is based on the idea of a traditional spinning magnetic disk, where you can seek to a sector and read or write it without affecting anything else on the disk.

Trends indicate that spinning magnetic disks will no longer follow that traditional model, and instead move toward Shingled Magnetic Recording (SMR). SMR is still based on spinning magnetic disks; gaining capacity in exchange for more expensive random overwrites.

PostgreSQL depends heavily on cheap overwrites. That design does not look viable for long, as available magnetic storage trends toward SMR. PostgreSQL needs to adapt the storage architecture to avoid random writes.

Brief Overview of SMR

The fundamental property that SMR is based on is that read heads can be made several times smaller than write heads. Traditional magnetic disks simply make a track the same width as the write head. An SMR makes the tracks the same width as the read head, allowing the drive to pack more tracks on a single platter, increasing the capacity. It does this by writing subsequent tracks that partially overlap the previous track (resembling shingles on a roof), but still leaving enough room for the read head to read the previous track:

Note that reads (random or sequential) and appends are very similar in an SMR or traditional magnetic disk; but as described in the next section, random writes are not.

Random Overwrites on SMR

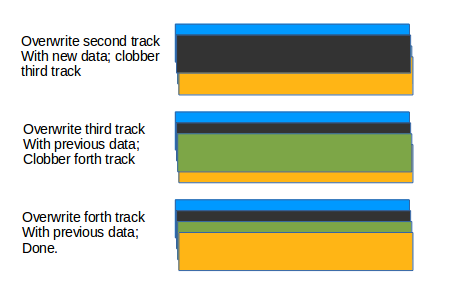

The disadvantage is that it's more expensive to update earlier tracks. For instance, to update the second track, you need a 3-step process:

The second track gets replaced properly, but it requires saving all subsequent tracks, and rewriting them in order.

Naively, you'd need to update all of the tracks from the one you want to update until the end of the drive, which would be far too costly and consume too much memory. Instead, SMR drives are divided into zones (each one on the order of hundreds of MB), and the zone is followed by dead space, so that only the zone needs to be rewritten. Also, it allows the entire zone to just be rewritten in a new location on the drive, to avoid problems if there is a power failure in the middle of an update operation (otherwise the subsequent tracks would need to be saved in some kind of non-volatile memory).

Also, SMR drives may have regions of non-shingled storage for data that needs to be updated more frequently (e.g. metadata).

In any case, applications running on magnetic storage should be prepared for random writes that are much more expensive than on traditional magnetic disks.

PostgreSQL and SMR

PostgreSQL is a general-purpose database that runs in many environments, and can't expect to control whether the data ultimately ends up on SSD, SMR, or traditional magnetic disks. Similarly, if it does end up on an SMR drive, it can't expect to control whether it writes to the shingled or non-shingled region. Traditional magnetic disks will decline in availability and market share rapidly, in favor of SSDs (expensive, low capacity, fast) and SMRs (cheap, high capacity, slow).

Therefore, it's critical that PostgreSQL's storage architecture adapt to work effectively in the shingled area of SMR drives – that is, avoid overwrites.

PostgreSQL and Random Overwrites

PostgreSQL's storage model is heavily based on cheap random block overwrites, even when the data is not logically updated with UPDATE/DELETE.

PD_ALL_VISIBLE

When all the tuples on a page are visible to all other transactions (that is, no transaction holds a snapshot old enough that it doesn't see the current version of any row on the page), PostgreSQL will set PD_ALL_VISIBLE and dirty the page, likely causing a random overwrite. This bit allows PostgreSQL to avoid all visibility tests when scanning the page.

Hint Bits

When the transaction corresponding to the XMIN or XMAX of a tuple completes, and the tuple is read, a tuple header bit is updated and the page is dirtied. These hint bits allow PostgreSQL to avoid expensive transaction status lookups. Typically, many hint bits are set on a page at nearly the same time, so each one doesn't represent an additional write to disk. Even so, hint bits often result in additional random overwrites of a page; for example, after a data load, it generally results in one extra write per page.

Page Freezing

Before transaction wraparound, all of the old transactions in the system must be replaced by FrozenTransactionId or InvalidTransactionId; this process is called "freezing". Transaction IDs are stored in the tuple headers, so replacing the transaction IDs requires changing (and dirtying) the page. This typically results in one extra overwrite for long-lived data.

File Extension

To extend a file or pre-allocate a WAL segment, PostgreSQL writes zero-filled blocks. In the case of data files, this is often not a big problem because file extension is quickly followed by writing the same page with the actual data on it. Typically, the filesystem cache will prevent this from causing two page writes, but that won't always be the case.

WAL Recycling

WAL recycling is explicitly based on the idea that overwrites are cheaper than appending to new files. Newer versions of PostgreSQL can disable this with min_wal_size=0.

Possible Approaches

Do Nothing

PostgreSQL can do nothing, and rely on the underlying filesystem, drivers, or SMR drive itself to effectively emulate random writes. This is a dangerous strategy:

- PostgreSQL is a database, not a "normal" application, so the underlying layers may not be making suitable trade-offs for PostgreSQL. Filesystems and other databases are likely to adapt to some degree, so the expectation will be that PostgreSQL should, as well.

- The emulation of random writes will vary wildly between different systems.

- The emulation of random writes will still have a big cost compared to random writes on a traditional magnetic disk.

Minor Changes

These could be tweaks to the existing storage system without a major overhaul.

Eliminate PD_ALL_VISIBLE

This is a redundant bit (also available in the Visibility Map, or VM) without a lot of benefit. For small tables, there's no need to even read it because the benefit is immeasurable compared with the query overhead. For large table scans, reading the bit from the VM and keeping the current VM page pinned during a scan is no problem. For random bulk updates (e.g. UPDATE...FROM) on tables larger than 1GB that still fit in memory, there would be a modest single-threaded regression because of the cost to pin/unpin the right VM page for the update. But if the goal is to reduce random disk overwrites, this seems like a viable approach.

Use posix_fallocate for Extension

This allows the underlying FS to avoid writing the zero page, and therefore avoid making an update later. Likely effective for WAL, but perhaps also effective for relation extension.

Don't Recycle WAL

Fortunately, in 9.5, users can avoid WAL recycling by setting min_wal_size=0.

Freeze Without Overwrite

There's a proposal here to avoid rewriting pages during a freeze:

http://www.postgresql.org/message-id/51A7553E.5070601@vmware.com

Configurable Free Space Reclamation

Allow configuration to disable the typical space reclamation, and always append. A VACUUM FULL would need to be used to reclaim the dead space.

Major Changes

These could be tweaks to the existing storage system without a major overhaul.

Separate Volatile Data

Tuple headers are much more volatile than the tuples themselves, and much smaller. If the tuple headers are held in a separate physical file, the main tuple data could remain more static during hint bit updates, PD_ALL_VISIBLE updates, freezing, updating, and deleting. Of course, the main tuple data would still need to be cleaned up at some point, but could be deferred much longer (amortizing the cost of rewriting data over more changes).

Log-based Structure

LSM trees and log-based filesystems are designed to avoid random writes. PostgreSQL could use a structure based on those concepts to avoid random writes to the disk.

Notes from Unconference

SMR Drives

Attendees

Add your name here if you want:

Session Leader: Jeff Davis. Notetakers: Josh Berkus

New high-density drives have bigger write than read heads because the read head can be smaller. The drive thus lays each write over multiple read tracks, like shingles. This works fine for append-only operations.

But if you're not appending, you end up with a real problem. You have to clobber multple tracks every time you do a write; your write-in-place clobbers multiple tracks. So a random rewrite has to rewrite a bunch of data. There are "zones" of blank space to prevent this shingle chain from going on forever, like every 256. Random writes are much much more expensive.

Obviously a lot of people use SSDs, but for really large databases (terabytes) this will be a big issue.

Do you believe that all magnetic drives will be made this way soon? Yes, Jeff does.

Will manufatuctures paper over this? There's only so much they can do. Are they going to buffer random writes with SSD and other things? Probably, but it don't be adequate. Postgres has its own write pattern which a layer can't protect it.

Smith said that we've been through this with hybrid drives, and they are counter-performance because of the 2-tier system. If you have more transactions than you have in the buffer, you're just delaying the problem. Hybrid drives give you almost random performance.

Josh pointed out issues with hint bits and freeze. And that there are other types of append-only storage. Jeff brought up other examples.

Postgres has been used to cheap random updates, which is going away.

Jeff went over some of the problems on the wiki page: https://wiki.postgresql.org/wiki/PostgreSQL_and_SMR_Drives

- PD_ALL_VISIBLE

- Hint bits

- Page Freezing

- File extension using zero-filled pages. This is an easier one to fix.

- WAL recycling assumes that overwrites are cheaper than new files

Simon mentioned this is fairly easy to change. Tom pointed out that the reason for WAL recycling had to do with getting a reliable error when we're out of space instead of cheap overwrites. We tried POSIX fallocate, but performance sucked. Maybe it would be better on the new hardware.

Simon asked about the file extension one. It's allocated in 8K blocks. Why do we zero-out the whole file for WAL?

Jeff went over some possible remedial routes.

Manfacturers and OS developers are unlikely to optimize for our workload, so it's not viable to do nothing.

We could do some minor tweaks:

- Eliminate PD_ALL_VISIBLE: this is really redundant to the visibility map in Jeff's opinion.

- use POSIX fallocate for extension for relation extension

- Don't recycle WAL

- Freeze without overwrite: there's a proposal for this already.

- Configurable free space reclaim in tables

For freeze without overwrite, Heikki didn't feel that the prior work would succeed. He was thinking an epoch on the page header would be a lot more successful. He wants someone else to take over the freezing work.

Simon asked about changing the block format as a measure. What about special areas in the page header. Are we ever going to do this? Nobody knows. We need a system which can cope with different types of storage, old and new format pages. If we ever change the page header format, we need to have a plan to upgrade and deprecate formats.

Simon says if we're only changing a couple fields in the page header, then supporting those side-by-side isn't that challenging. Frost said we don't encourage people to play with the page format, because we can't support the change. Hekki said we don't know after upgrading which databases have been upgraded; we need a relminpageversion, or something similar. We need to be able to query this. Some of our indexes already have this in a metapage.

Now that we have an in-place upgrade utility, we have a better idea of what's needed. Let's cut it down to block format upgrade. The changes which Jeff has been discussing seems very doable. We don't want to pile on new page format features and take too much risk.

For 9.5, should we add a pg_control field which says what version we initdb'd at. Maybe we should have a history file for upgrades instead.

Josh asked about indexes. Jeff said that indexes need to be random writes because the index has to be ordered. You can mitigate that with different index structures like BRIN or LSM-Tree. Or maybe you just put your indexes on SSDs.

SMR is liable to be used for large never-delete archive tables.

Amit asked whether we can trust the OS to write things sequentially. Jeff thinks so, and anyway it's not our job to fix that. Users can randomly update tuples. But even for a purely append-only table we do overwrites.

Jeff suggested some more radical changes:

- store metadata and volatile elements in separate extents which might be stored elsewhere.

- Create storage options for log-based structures like LSM-Trees.

Discussion about optimizing indexes ensued.

New SMR drives -- how much bigger? Current commecial models are around 2.5X bigger. 3X as much IO, but 3X as much data.